Rethinking LLM Hallucination: It's Not Magic, It's Mathematics

When I first started using Large Language Models (LLMs), they felt like magic. You type in a question, and within seconds, there's a beautifully written answer. It almost felt like the machine was thinking by itself.

Later, I heard someone on a podcast describe LLMs as "friendly machines eager to help." That clicked with me. LLMs are so eager, in fact, that they'll give you something even if they don't really "know" the answer. Sometimes that something is made up. That's what we often call hallucination.

But here's the shift that changed my perspective: LLMs aren't magical companions, and they aren't liars either. They're just functions.

LLMs Are Just Functions

Think of an LLM like a mathematical function:

- You give it an input.

- It processes that input.

- It gives you an output.

Its very nature is to return some output if you give it any input. There's no "deciding" not to answer. That's why it sometimes fabricates — it's just filling the blank with the most likely continuation it can calculate.

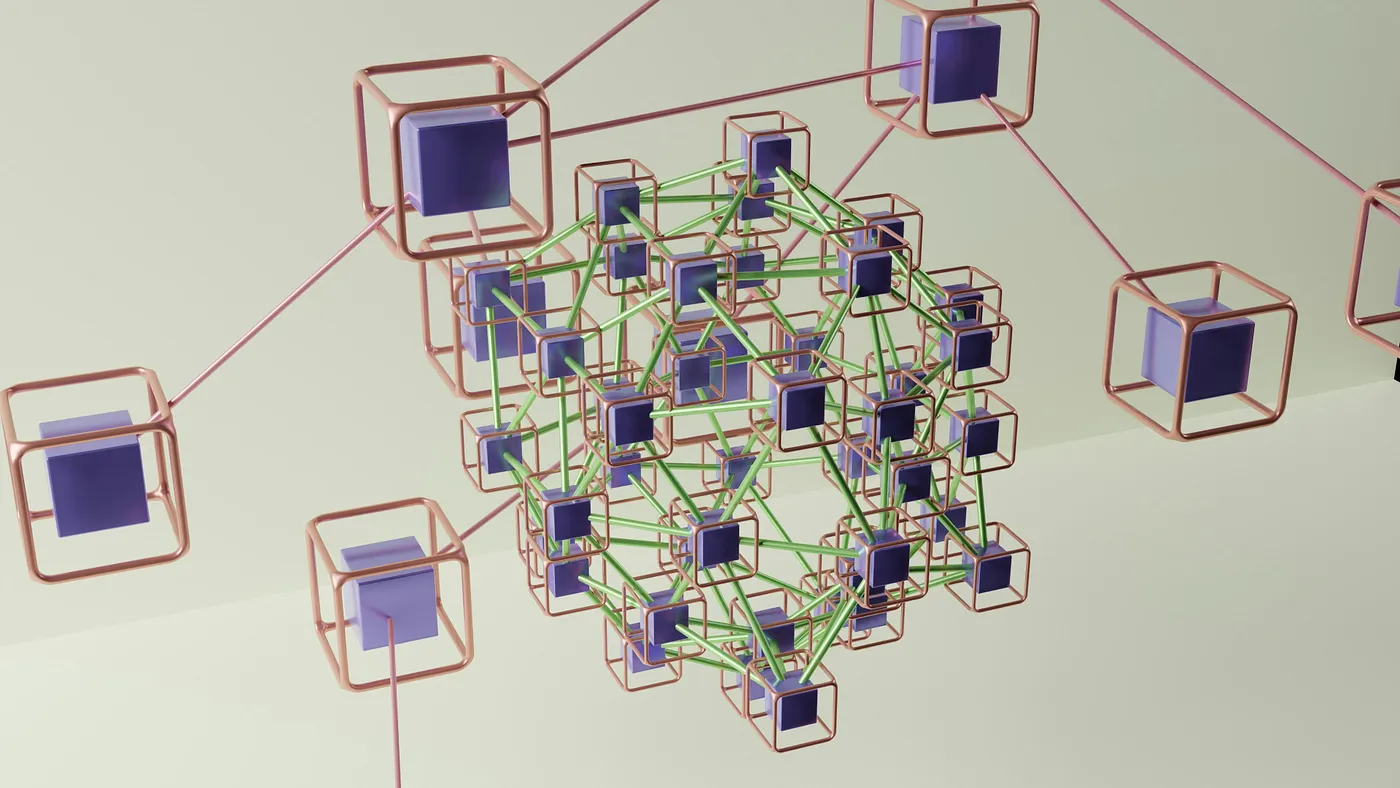

How It Really Works

Under the hood, LLMs are basically giant graphs of nodes and weights. Every concept or word is a node, and the connections between them have different strengths.

- Your prompt activates a set of nodes.

- The model traces the strongest connections.

- The result is built by following those paths.

The more precise your input, the fewer "wild guesses" the model has to make.

Why Specific Prompts Matter

If your prompt is vague, the model has a lot of possible paths to choose from — which increases the chances of a wrong turn. But if your prompt is specific, the model has fewer choices and stays closer to what you actually want.

That's why prompt optimization techniques exist. They help align your input better with the model so the output is less fuzzy. A few examples:

- Chain of Thought prompting → nudging the model to reason step by step.

- Few-shot prompting → showing examples so it learns the style/format you want.

- RAG (Retrieval-Augmented Generation) → pulling in external knowledge to ground the response.

- Instruction tuning → making the model follow directions more strictly.

No need to overcomplicate — each of these is basically about feeding the model better context.

It's Not Really Hallucination

So, is the model truly "hallucinating"? I don't think so. It's just doing its job: generating an output from the input.

Take a simple example: in a viva exam, the teacher asks, "What is a register?"

- If you answer, "A register is a notebook," you're not wrong in general English.

- If you answer, "A register is the smallest unit of storage in a CPU," you're right in computer science.

Both answers are valid — the difference is context. The same applies to LLMs. If your input lacks context, the output might drift.

Final Thoughts

What people call hallucination is often just a context mismatch. LLMs don't imagine, they calculate. And like any function, they'll always give you something back.

Once I understood this, the magic disappeared — but so did the mystery. Now, I see LLMs as powerful tools that become more reliable the better we guide them. The more we optimize our prompts and supply the right context, the more useful their outputs become.

And if this article doesn't make any sense… well, just assume it's my own little hallucination. 😉